In a previous blog entry I gave some background on Erik Verlinde’s proposal for an emergent, thermodynamic basis of gravity. Gravity remains mysterious 100 years after Einstein’s introduction of general relativity, both because it is so weak relative to the other main forces and because general relativity, a classical theory, contains no quantum mechanical description of it.

One reason it may be so weak is that it is not fundamental at all, but rather a statistical, emergent phenomenon. There has been increasing research into the idea of emergent spacetime and emergent gravity, and the most interesting proposal was recently introduced by Erik Verlinde at the University of Amsterdam in a paper, “Emergent Gravity and the Dark Universe”.

A lot of work has been done assuming anti-de Sitter (AdS) spaces with negative cosmological constant Λ – just because it is easier to work under that assumption. This year, Verlinde extended this work from the unrealistic AdS model of the universe to a more realistic de Sitter (dS) model. Our runaway universe is approaching a dark energy dominated dS solution with a positive cosmological constant Λ.

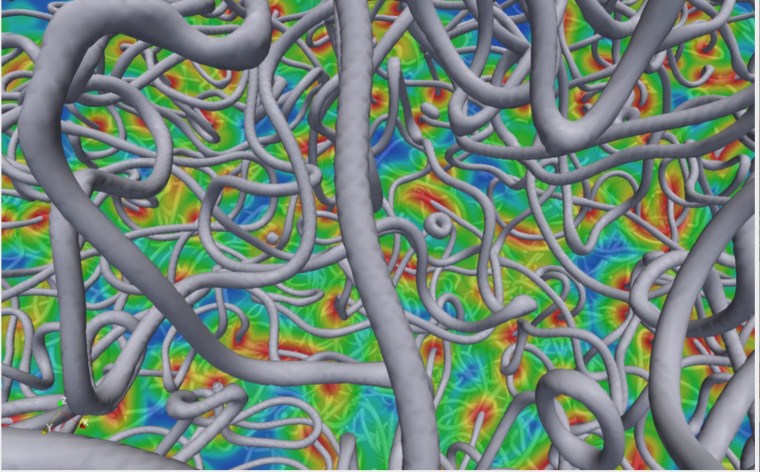

The background assumption is that quantum entanglement dictates the structure of spacetime, and its entropy and information content. Quantum states of entangled particles are coherent: observing a property of one, say the spin orientation, tells you about the other particle’s attributes. This has been observed in long distance experiments, with separations exceeding 100 kilometers.

If space is defined by the connectivity of quantum entangled particles, then it becomes almost natural to consider gravity as an emergent statistical attribute of the spacetime. After all, we learned from general relativity that “matter tells space how to curve, curved space tells matter how to move” – John Wheeler.

What if entanglement tells space how to curve, and curved space tells matter how to move? What if gravity is due to the entropy of the entanglement? Actually, in Verlinde’s proposal, the entanglement entropy from particles is minor; it is the entanglement of the vacuum state, of dark energy, that dominates, and by a very large factor.

One analogy is thermodynamics, which allows us to represent the bulk properties of an atmosphere that is nothing but a collection of a very large number of molecules and their microstates. Verlinde posits that the information and entropy content of space are due to the excitations of the vacuum state, which is manifest as dark energy.

The connection between gravity and thermodynamics has been around for 3 decades, through research on black holes, and from string theory. Jacob Bekenstein and Stephen Hawking determined that a black hole possesses entropy proportional to its area divided by the gravitational constant G. String theory can derive the same formula for quantum entanglement in a vacuum, by way of what is known as the AdS/CFT (conformal field theory) correspondence.
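For reference, the Bekenstein-Hawking relation described above is usually written in the following form. The symbols are introduced here rather than taken from the post: $A$ is the horizon area, $k_B$ is Boltzmann’s constant, and $\hbar$ is the reduced Planck constant.

```latex
S_{BH} = \frac{k_B\, c^3\, A}{4\, G\, \hbar}
```

The entropy scales with the area of the horizon rather than with the enclosed volume, which is the “area law” that the later discussion contrasts with a volume contribution.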

So in the AdS model, gravity is emergent and its strength, the acceleration at a surface, is determined by the mass density on that surface surrounding matter with mass M. This is just the inverse square law of Newton. In the more realistic dS model, the entropy in the volume, or bulk, must also be considered. (This is the Gibbs entropy relevant to excited states, not the Boltzmann entropy of a ground state configuration.)

Newtonian dynamics and general relativity can be derived from the surface entropy alone, but do not reflect the volume contribution. The volume contribution adds an additional term to the equations, strengthening gravity over what is expected, and as a result, the existence of dark matter is ‘spoofed’. But there is no dark matter in this view, just stronger gravity than expected.

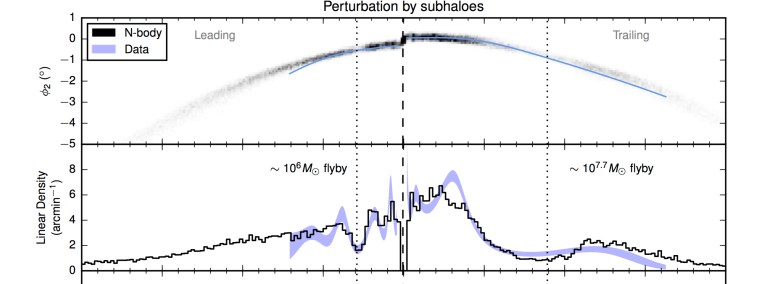

This is what the proponents of MOND (Modified Newtonian Dynamics) have been saying all along. Mordehai Milgrom observed that galactic rotation curves go flat at a characteristic low acceleration scale of order 2 centimeters per second per year. MOND is phenomenological: it captures a trend in galaxy rotation curves, but it does not have a theoretical foundation.

Verlinde’s proposal is not MOND, but it provides a theoretical basis for behavior along the lines of what MOND states.

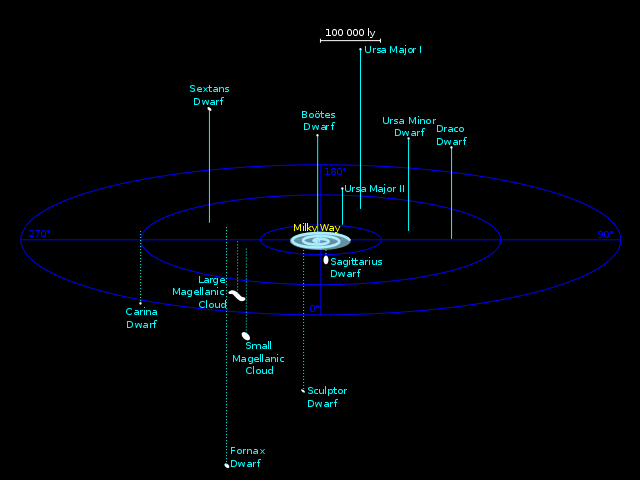

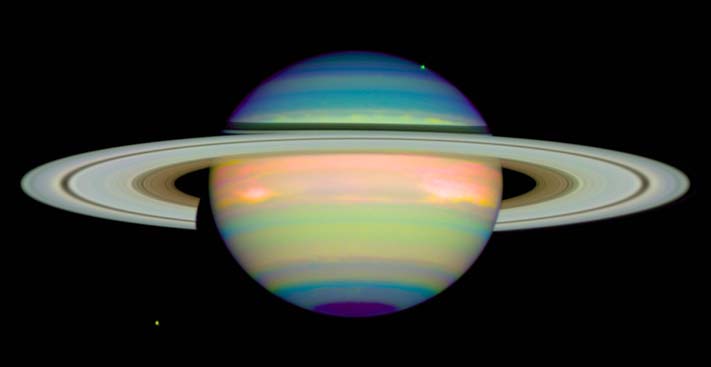

Now the volume in question turns out to be of order the Hubble volume, whose radius is the Hubble length $c/H$, where $H$ is the Hubble parameter denoting the rate at which galaxies recede from one another. Reminder: Hubble’s law is $v = H \cdot d$, where $v$ is the recession velocity and $d$ the distance between two galaxies. The lifetime of the universe is approximately $1/H$.

The value of c/H is over 4 billion parsecs (one parsec is 3.26 light-years), so it is in galaxies, in clusters of galaxies, and at the largest scales in the universe that departures from general relativity (GR) would be expected.
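As a quick check of these scales, here is a minimal Python sketch that evaluates the Hubble length c/H and the Hubble time 1/H. The value of 70 km/s/Mpc for the Hubble parameter is an assumption made for illustration, not a number quoted in the post, and the function names are hypothetical.

```python
# Hubble length c/H and Hubble time 1/H for an assumed Hubble parameter.
KM_PER_MPC = 3.0857e19        # kilometers in one megaparsec
C_KM_S = 299_792.458          # speed of light, km/s
SECONDS_PER_YEAR = 3.156e7    # approximate length of a year in seconds


def hubble_length_gpc(h0_km_s_mpc: float) -> float:
    """Return c/H in gigaparsecs (billions of parsecs)."""
    return C_KM_S / h0_km_s_mpc / 1000.0


def hubble_time_gyr(h0_km_s_mpc: float) -> float:
    """Return 1/H in billions of years."""
    h_per_second = h0_km_s_mpc / KM_PER_MPC   # convert H to units of 1/s
    return 1.0 / h_per_second / SECONDS_PER_YEAR / 1e9


H0 = 70.0  # km/s/Mpc, assumed for illustration
print(f"c/H = {hubble_length_gpc(H0):.2f} Gpc")
print(f"1/H = {hubble_time_gyr(H0):.1f} Gyr")
```

With this assumed value, c/H comes out a little over 4 billion parsecs and 1/H close to 14 billion years.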

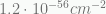

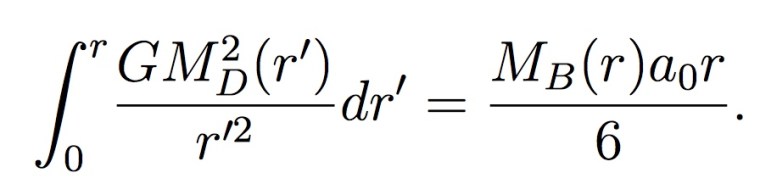

Dark energy in the universe takes the form of a cosmological constant Λ, whose value is measured to be about $1.1 \times 10^{-56}\ \mathrm{cm^{-2}}$. Hubble’s parameter is about $2.2 \times 10^{-18}\ \mathrm{sec^{-1}}$. A characteristic acceleration is thus $H^2/\sqrt{\Lambda}$, or about $5 \times 10^{-8}$ cm per sec per sec (cm = centimeters, sec = second).

One can also define a cosmological acceleration scale simply by $c \cdot H$; the value for this is about $6.6 \times 10^{-8}$ cm per sec per sec (around 2 cm per sec per year), and is about 15 billion times weaker than Earth’s gravity at its surface! Note that the two estimates are quite similar.
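The two acceleration estimates can be compared numerically. The sketch below assumes round values for the Hubble parameter and the cosmological constant in cgs units (labeled as assumptions in the code); the function names are hypothetical.

```python
import math

C_CM_S = 2.998e10            # speed of light, cm/s
G_EARTH = 980.0              # Earth's surface gravity, cm/s^2
SECONDS_PER_YEAR = 3.156e7   # approximate length of a year in seconds


def accel_from_lambda(hubble_per_s: float, lambda_per_cm2: float) -> float:
    """Characteristic acceleration H^2 / sqrt(Lambda), in cm/s^2."""
    return hubble_per_s ** 2 / math.sqrt(lambda_per_cm2)


def accel_from_hubble(hubble_per_s: float) -> float:
    """Cosmological acceleration scale c * H, in cm/s^2."""
    return C_CM_S * hubble_per_s


H = 2.2e-18        # Hubble parameter in 1/s (assumed, roughly 68 km/s/Mpc)
LAMBDA = 1.1e-56   # cosmological constant in 1/cm^2 (assumed)

a_lam = accel_from_lambda(H, LAMBDA)
a_hub = accel_from_hubble(H)
print(f"H^2/sqrt(Lambda) = {a_lam:.2e} cm/s^2")
print(f"c*H              = {a_hub:.2e} cm/s^2")
print(f"c*H per year     = {a_hub * SECONDS_PER_YEAR:.2f} cm/s per year")
print(f"Earth gravity / (c*H) = {G_EARTH / a_hub:.2e}")
```

Under these assumptions both estimates land within a factor of two of each other, and c·H works out to about 2 cm per sec per year.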

This is no coincidence since we live in an approximately dS universe, with a measured Λ ~ 0.7 when cast in terms of the critical density for the universe, assuming the canonical ΛCDM cosmology. That’s if there is actually dark matter responsible for 1/4 of the universe’s mass-energy density. Otherwise Λ could be close to 0.95 times the critical density. In a fully dS universe, $\Lambda c^2 = 3H^2$, so the two estimates should be equal to within $\sqrt{3}$, which is approximately the difference in the two estimates.
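The factor relating the two estimates follows in one step if one assumes a pure de Sitter universe, in which the Friedmann equation contains only the cosmological constant term:

```latex
H^2 = \frac{\Lambda c^2}{3}
\quad\Longrightarrow\quad
\frac{H^2}{\sqrt{\Lambda}} = \frac{c\,H}{\sqrt{3}} \approx 0.58\, c\,H
```

In a universe that also contains matter the numerical factor differs somewhat, so this should be read as an idealized limit.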

So from a string theoretic point of view, excitations of the dark energy field are fundamental. Matter particles are bound states of these excitations; particles move freely and have much lower entropy. Matter creation removes both energy and entropy from the dark energy medium. General relativity describes the response of area law entanglement of the vacuum to matter (but does not take into account volume entanglement).

Verlinde proposes that dark energy (Λ) and the accelerated expansion of the universe are due to the slow rate at which the emergent spacetime thermalizes. The time scale for the dynamics is 1/H and a distance scale of c/H is natural; we are measuring the time scale for thermalization when we measure H. High degeneracy and slow equilibration mean the universe is not in a ground state, thus there should be a volume contribution to entropy.

When the surface mass density falls below $c \cdot H/(8\pi G)$, things change, and Verlinde states that the spacetime medium becomes elastic. The effective additional ‘dark’ gravity is proportional to the square root of the ordinary matter (baryon) density and also to the square root of the characteristic acceleration $c \cdot H/6$.

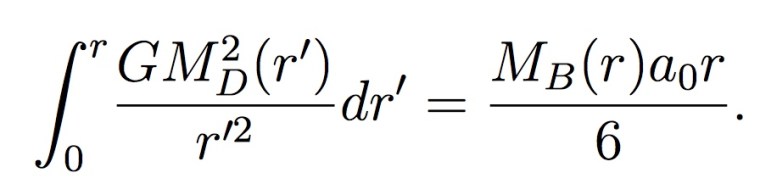

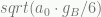

This dark gravity additional acceleration satisfies the equation $g_D^2 = g_B \cdot a_0$, where $g_B$ is the usual Newtonian acceleration due to baryons and $a_0 = c \cdot H/6$ is the dark gravity characteristic acceleration. The total gravity is $g = g_B + g_D$. For large accelerations this reduces to the usual $g_B$ and for very low accelerations it reduces to $\sqrt{a_0 \cdot g_B}$.
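To see the two limits concretely, here is a small Python sketch of the relation described above, taking the dark gravity term as the square root of the product of the Newtonian acceleration and the characteristic acceleration. The numerical value of the characteristic acceleration is an assumed round figure for c·H/6, and the function names are hypothetical.

```python
import math

A0 = 1.1e-8  # characteristic acceleration in cm/s^2, assumed value of c*H/6


def dark_gravity(g_b: float, a0: float = A0) -> float:
    """Additional 'dark' acceleration g_D = sqrt(a0 * g_B), in cm/s^2."""
    return math.sqrt(a0 * g_b)


def total_gravity(g_b: float, a0: float = A0) -> float:
    """Total acceleration g = g_B + g_D, in cm/s^2."""
    return g_b + dark_gravity(g_b, a0)


# From Earth's surface gravity down to the very low acceleration regime.
for g_b in (980.0, 1e-6, A0, 1e-10, 1e-12):
    ratio = total_gravity(g_b) / g_b
    print(f"g_B = {g_b:.1e} cm/s^2   g / g_B = {ratio:.4f}")
```

At high accelerations the ratio is indistinguishable from 1, while below the characteristic acceleration the dark term dominates and the total approaches the square-root form.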

The value $c \cdot H/6$, at $1.1 \times 10^{-8}$ cm per sec per sec, derived from first principles by Verlinde, is quite close to the MOND value of Milgrom, determined from galactic rotation curve observations, of $1.2 \times 10^{-8}$ cm per sec per sec.

So suppose we are in a region where $g_B$ is only $1.1 \times 10^{-8}$ cm per sec per sec, equal to the dark gravity characteristic acceleration. Then $g_D$ takes the same value and the gravity is just double what is expected. Since orbital velocities go as the square root of the acceleration, the orbital velocity is observed to be a factor of $\sqrt{2}$ (about 41%) higher than expected.

In terms of gravitational potential, the usual Newtonian potential goes as $1/r$, resulting in a $1/r^2$ force law, whereas for very low accelerations the potential now goes as $\log(r)$ and the resultant force law is $1/r$. We emphasize that while the appearance of dark matter is spoofed, there is no dark matter in this scenario; the reality is additional dark gravity due to the volume contribution to the entropy (that is displaced by ordinary baryonic matter).
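The change in force law shows up directly in circular orbital speeds. The following sketch is an idealization: it treats a galaxy as a single point mass of 10^11 solar masses and adds a dark gravity term equal to the square root of the Newtonian acceleration times an assumed characteristic acceleration. The mass, the constants, and the function name are illustrative assumptions, not values from Verlinde’s paper.

```python
import math

G_CGS = 6.674e-8     # gravitational constant, cm^3 g^-1 s^-2
M_SUN_G = 1.989e33   # solar mass in grams
KPC_CM = 3.0857e21   # one kiloparsec in centimeters
A0 = 1.1e-8          # characteristic acceleration in cm/s^2 (assumed c*H/6)


def circular_speeds_km_s(mass_msun: float, r_kpc: float, a0: float = A0):
    """Return (Newtonian, with dark gravity) circular speeds in km/s at r_kpc."""
    r = r_kpc * KPC_CM
    g_b = G_CGS * mass_msun * M_SUN_G / r ** 2   # Newtonian 1/r^2 acceleration
    g_total = g_b + math.sqrt(a0 * g_b)          # add the dark gravity term
    # Circular orbit: v^2 / r = g, so v = sqrt(g * r); convert cm/s to km/s.
    return math.sqrt(g_b * r) / 1e5, math.sqrt(g_total * r) / 1e5


for r_kpc in (10, 100, 1000, 10000):
    v_newton, v_total = circular_speeds_km_s(1e11, r_kpc)
    print(f"r = {r_kpc:>6} kpc   Newtonian {v_newton:6.1f} km/s   "
          f"with dark gravity {v_total:6.1f} km/s")
```

The Newtonian speed keeps falling as the inverse square root of the radius, while the speed with the extra term levels off, which is the flat rotation curve behavior associated with a 1/r force law.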

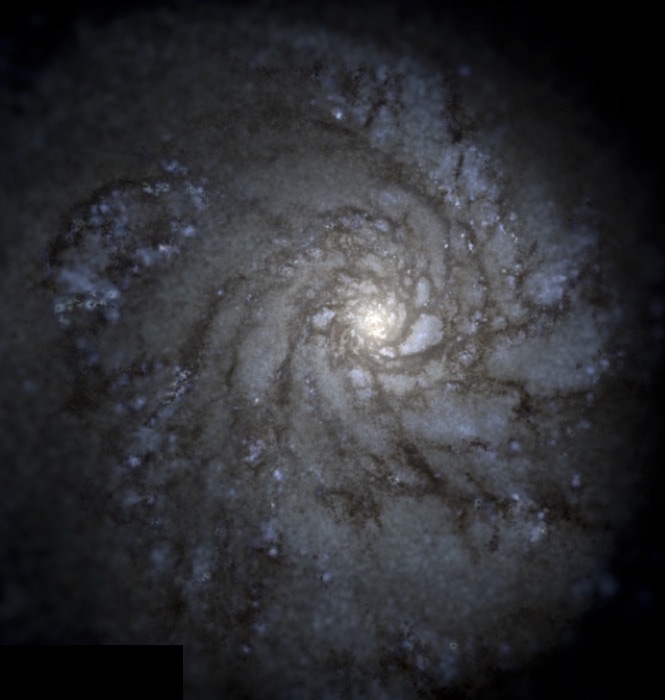

Flat to rising rotation curve for the galaxy M33

Dark matter was first proposed by Swiss astronomer Fritz Zwicky when he observed the Coma Cluster and the high velocity dispersions of the constituent galaxies. He suggested the term dark matter (“dunkle Materie”). Horace Babcock in 1939 measured the rotation curve for the Andromeda galaxy and it turned out to be flat, also suggestive of dark matter (or dark gravity). Decades later, in the 1970s and 1980s, Vera Rubin (who passed away just recently) and others mapped many rotation curves for galaxies and saw the same behavior. She herself preferred the idea of a deviation from general relativity over an explanation based on exotic dark matter particles. One needs about 5 times more matter, or about 5 times more gravity, to explain these curves.

Verlinde is also able to derive the Tully-Fisher relation by modeling the entropy displacement of a dS space. The Tully-Fisher relation is the strong observed correlation between galaxy luminosity and angular velocity (or emission line width) for spiral galaxies, $L \propto v^4$. With Newtonian gravity one would expect $M \propto v^2$. And since luminosity is essentially proportional to ordinary matter in a galaxy, there is a clear deviation by a ratio of $v^2$.
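A short sketch of how the fourth-power scaling can arise, assuming a point mass $M$ in the very low acceleration regime, where the total acceleration is approximately the square root of the product of the characteristic acceleration $a_0$ and the Newtonian acceleration $g_B = GM/r^2$ (this is an idealized limit, not Verlinde’s full derivation):

```latex
\frac{v^2}{r} = g \approx \sqrt{a_0\, g_B} = \frac{\sqrt{a_0\, G M}}{r}
\quad\Longrightarrow\quad
v^4 = G\, M\, a_0
```

The radius drops out, so the orbital speed depends only on the baryonic mass, and with luminosity proportional to that mass the luminosity scales as the fourth power of the speed.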

Apparent distribution of spoofed dark matter, for a given ordinary (baryonic) matter distribution

When one moves to the scale of clusters of galaxies, MOND is only partially successful: it explains a portion of the apparent mass discrepancy but comes up shy by a factor of 2. Verlinde’s emergent gravity does better. By modeling a general mass distribution he can gain a factor of 2 to 3 relative to MOND, and it appears that he can explain the velocity distribution of galaxies in rich clusters without the need to resort to any dark matter whatsoever.

And, impressively, he is able to calculate what the apparent dark matter ratio should be in the universe as a whole. The value is $\Omega_D^2 = \frac{4}{3}\,\Omega_B$, where $\Omega_D$ is the apparent mass-energy fraction in dark matter and $\Omega_B$ is the actual baryon mass density fraction. Both are expressed normalized to the critical density determined from the square of the Hubble parameter, $\rho_c = 3H^2/(8\pi G)$.

Plugging in the observed  one obtains

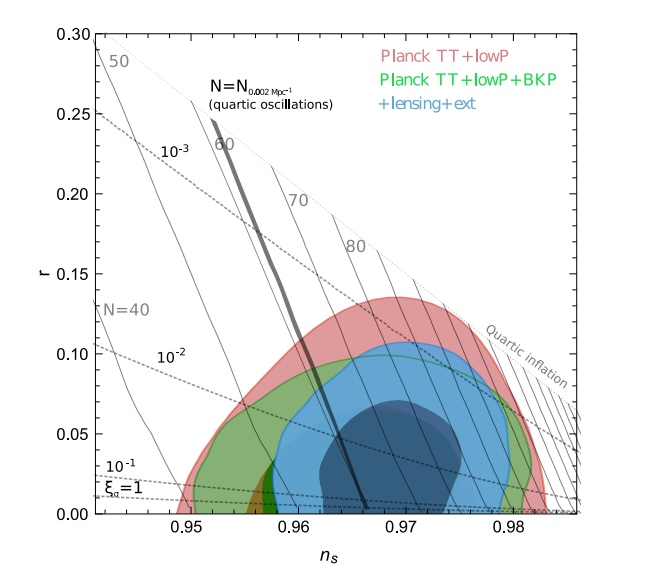

one obtains  , very close to the observed value from the cosmic microwave background observations. The Planck satellite results have the proportions for dark energy, dark matter, ordinary matter as .68, .27, and .05 respectively, assuming the canonical ΛCDM cosmology.

, very close to the observed value from the cosmic microwave background observations. The Planck satellite results have the proportions for dark energy, dark matter, ordinary matter as .68, .27, and .05 respectively, assuming the canonical ΛCDM cosmology.
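The arithmetic is simple enough to check in a few lines. This sketch assumes the relation from Verlinde’s paper in the form Ω_D² = (4/3) Ω_B and uses the baryon fraction of .05 quoted above; the function name is hypothetical.

```python
import math


def apparent_dark_fraction(omega_b: float) -> float:
    """Apparent dark matter fraction, assuming Omega_D^2 = (4/3) * Omega_B."""
    return math.sqrt(4.0 / 3.0 * omega_b)


OMEGA_B = 0.05  # baryon fraction of the critical density, as quoted from Planck
omega_d = apparent_dark_fraction(OMEGA_B)
print(f"Omega_B = {OMEGA_B:.2f}  ->  apparent Omega_D = {omega_d:.3f}")
print(f"apparent dark-to-baryon ratio = {omega_d / OMEGA_B:.1f}")
```

The resulting apparent dark-to-baryon ratio of about 5 is in line with the factor of roughly 5 in extra matter or extra gravity needed to explain rotation curves.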

The main approximations Verlinde makes are a fully dS universe and an isolated, static (bound) system with a spherical geometry. He also does not address the issue of galaxy formation from the primordial density perturbations. At first guess, the fact that he can get the right universal $\Omega_D$ suggests this may not be a great problem, but it requires study in detail.

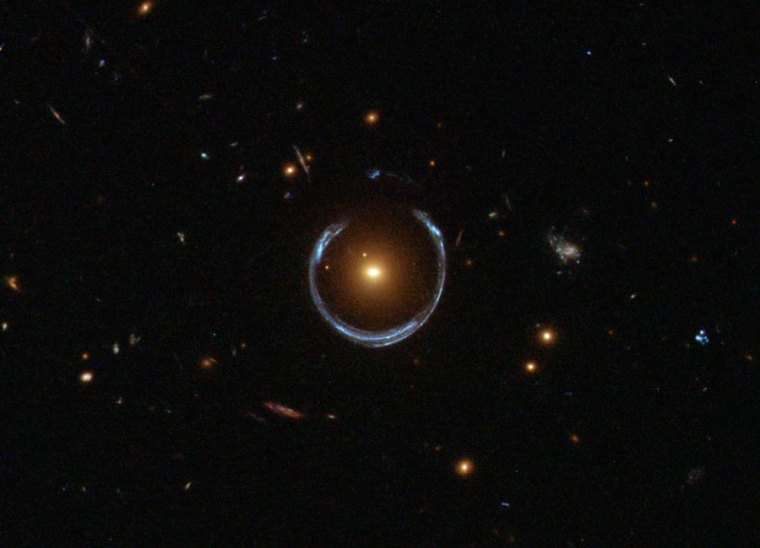

Breaking News!

Margot Brouwer and co-researchers have just published a test of Verlinde’s emergent gravity with gravitational lensing. Using a sample of over 33,000 galaxies they find that general relativity and emergent gravity can provide an equally statistically good description of the observed weak gravitational lensing. However, emergent gravity does it with essentially no free parameters and thus is a more economical model.

“The observed phenomena that are currently attributed to dark matter are the consequence of the emergent nature of gravity and are caused by an elastic response due to the volume law contribution to the entanglement entropy in our universe.” – Erik Verlinde

References

Erik Verlinde 2011 “On the Origin of Gravity and the Laws of Newton” arXiv:1001.0785

Stephen Perrenod, 2013, 2nd edition, “Dark Matter, Dark Energy, Dark Gravity” Amazon, provides the traditional view with ΛCDM (read Dark Matter chapter with skepticism!)

Erik Verlinde 2016 “Emergent Gravity and the Dark Universe” arXiv:1611.02269v1

Margot Brouwer et al. 2016 “First test of Verlinde’s theory of Emergent Gravity using Weak Gravitational Lensing Measurements” arXiv:1612.03034v

centimeters/second/second for the observed value of the Hubble parameter. The Newtonian acceleration at Saturn is .006 centimeters/second/second or 50,000 times larger.
